🔍 Inside Sora's $1M-a-day Collapse at OpenAI

A WSJ investigation revealed the behind-the-scenes chaos around OpenAI's shutdown of its Sora video generator. It found a $1M daily burn rate, a blindsided Disney, and an internally code-named model that claimed Sora's compute budget.

- —Sora was reportedly burning "roughly a million dollars a day" and consuming significant compute, and training for Sora 3 was set to begin just as the project was axed

- —Disney learned about the shutdown "less than an hour" before the announcement, with the relationship now "effectively dormant"

- —The freed-up chips went to "Spud," a model aimed at coding and enterprise customers, in response to Anthropic's strong push into that market

- —An enterprise version of Sora was already in pilot with Disney for marketing and VFX work, with a spring launch expected

Why it matters: Blindsiding Disney is a strange way to handle a potential $1B partnership with one of the biggest media companies on the planet.

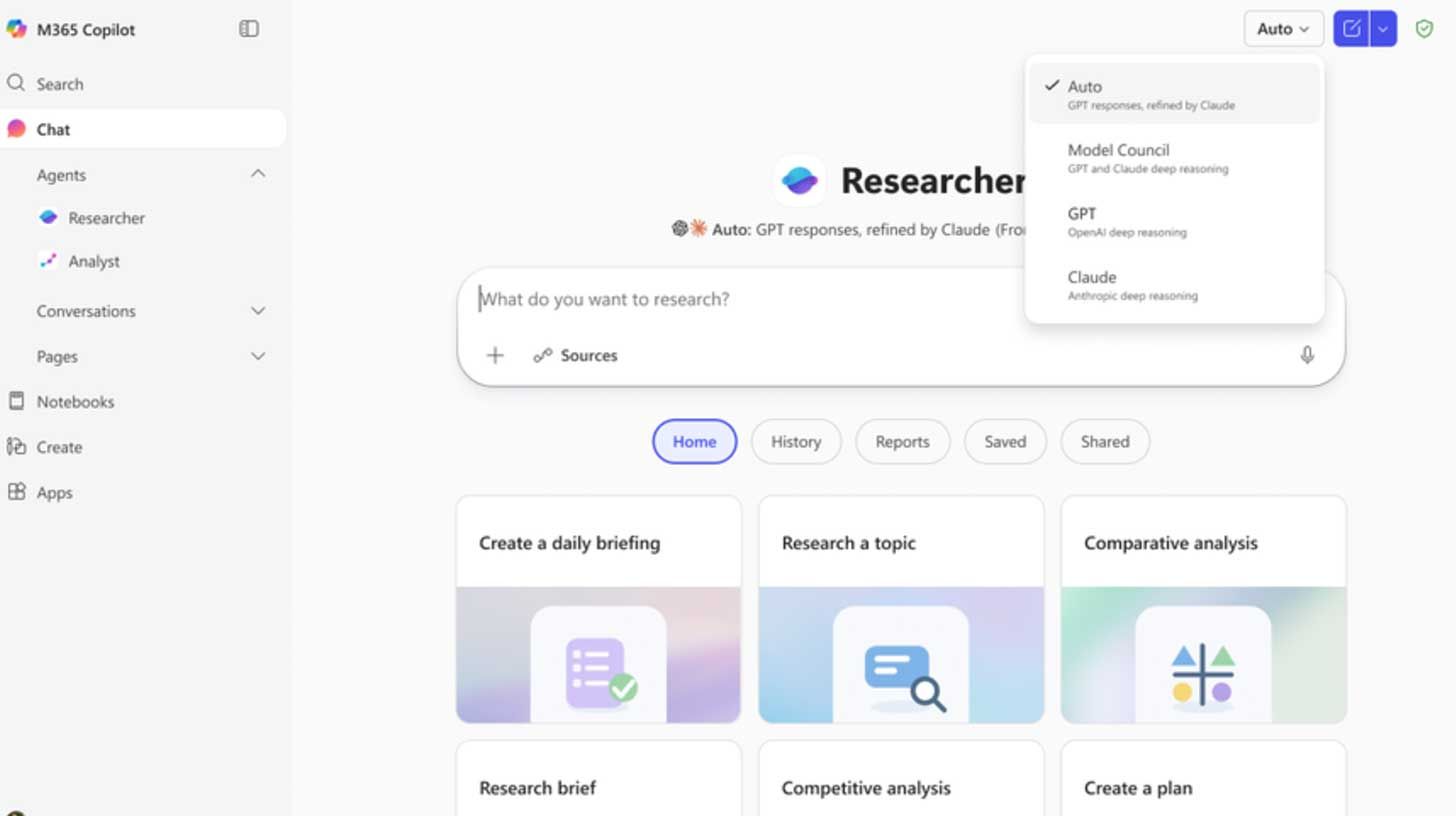

🔬 Microsoft Pits Claude Against ChatGPT for Research

Microsoft released Critique and Council, two new features that turn Copilot's Researcher into a multi-model system that can review and edit research reports or run two models side by side.

- —Copilot's Researcher already uses OpenAI's models for multi-step work; Critique now adds Claude as a second model that reviews every report before it ships

- —Behind the scenes, one model drafts the report and the second tears it apart on source quality, completeness, and how well its claims are grounded in evidence

- —Model Council mode runs both models side by side, then flags where they agree, where they split, and what each uniquely surfaced

- —The updates arrive alongside a broader rollout of Copilot Cowork, Microsoft's Claude-based agentic tool, through its Frontier program
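To picture what Council's agree/split/unique view does, here is a minimal Python sketch. It assumes, purely for illustration, that each model's report has already been boiled down to a dictionary mapping a topic to that model's conclusion. `council_compare` and the sample topics are hypothetical, and Microsoft has not said how Council actually compares reports.

```python
from typing import Dict, List

# Hypothetical sketch: each report is assumed to be pre-reduced to
# {topic: conclusion}. This is NOT Microsoft's published method.
def council_compare(report_a: Dict[str, str], report_b: Dict[str, str]) -> Dict[str, List[str]]:
    shared = report_a.keys() & report_b.keys()  # topics both models covered
    return {
        # Same topic, same conclusion: the models agree
        "agree": sorted(t for t in shared if report_a[t] == report_b[t]),
        # Same topic, different conclusion: the models split
        "split": sorted(t for t in shared if report_a[t] != report_b[t]),
        # Topics only one model surfaced
        "only_a": sorted(report_a.keys() - report_b.keys()),
        "only_b": sorted(report_b.keys() - report_a.keys()),
    }

if __name__ == "__main__":
    model_a = {"market size": "growing", "top risk": "regulation", "pricing": "stable"}
    model_b = {"market size": "growing", "top risk": "competition", "hiring": "slowing"}
    print(council_compare(model_a, model_b))
```

Keying on topics rather than raw text is the simplifying assumption that makes "split" detectable: two reports only disagree if they reach different conclusions about the same thing.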

Why it matters: A single model will sell you on almost anything, so you'd better ask two. Multi-model collaboration looks like the future.

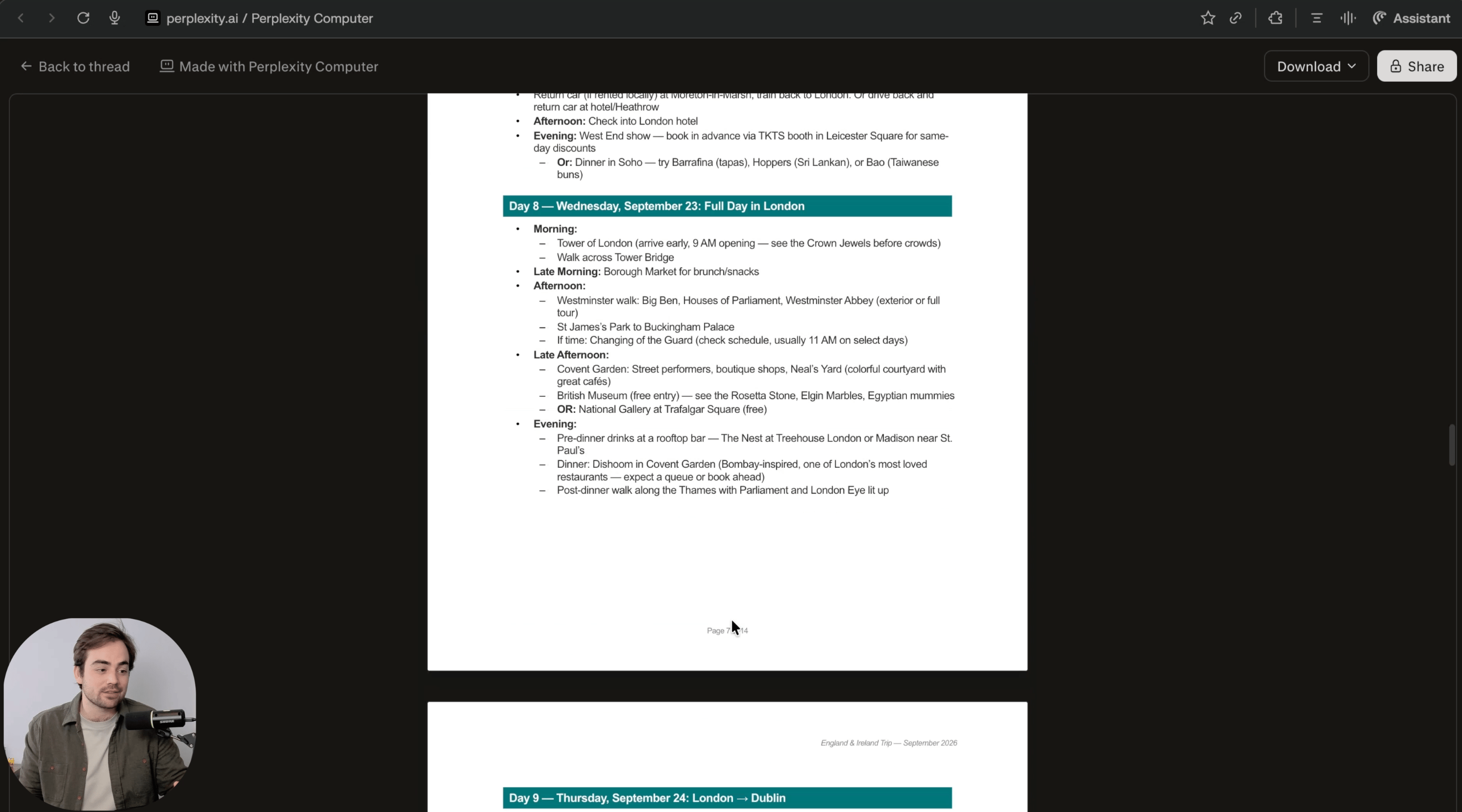

🗺️ Build a Travel Itinerary with Perplexity Computer

In this guide, you will learn how to use Perplexity Computer to plan a full trip itinerary with flights, a day-by-day schedule, and sources in one run.

- —Open Perplexity and enable Computer mode (Pro accounts can test for free)

- —Example prompt: "Plan a trip itinerary for [DESTINATION] for [DATES]. Departing from: [AIRPORT] Budget: [range] Style: [relaxed/outdoors/etc.] Must-haves: [2-4 items]. Make a full PDF as if you were a travel agent"

- —Let Perplexity Computer run for 15 to 20 minutes, and it will return a PDF itinerary

Why it matters: Perplexity Computer can also deploy sub-agents that write code, so you can ask it to build an interactive calendar website to help you plan.

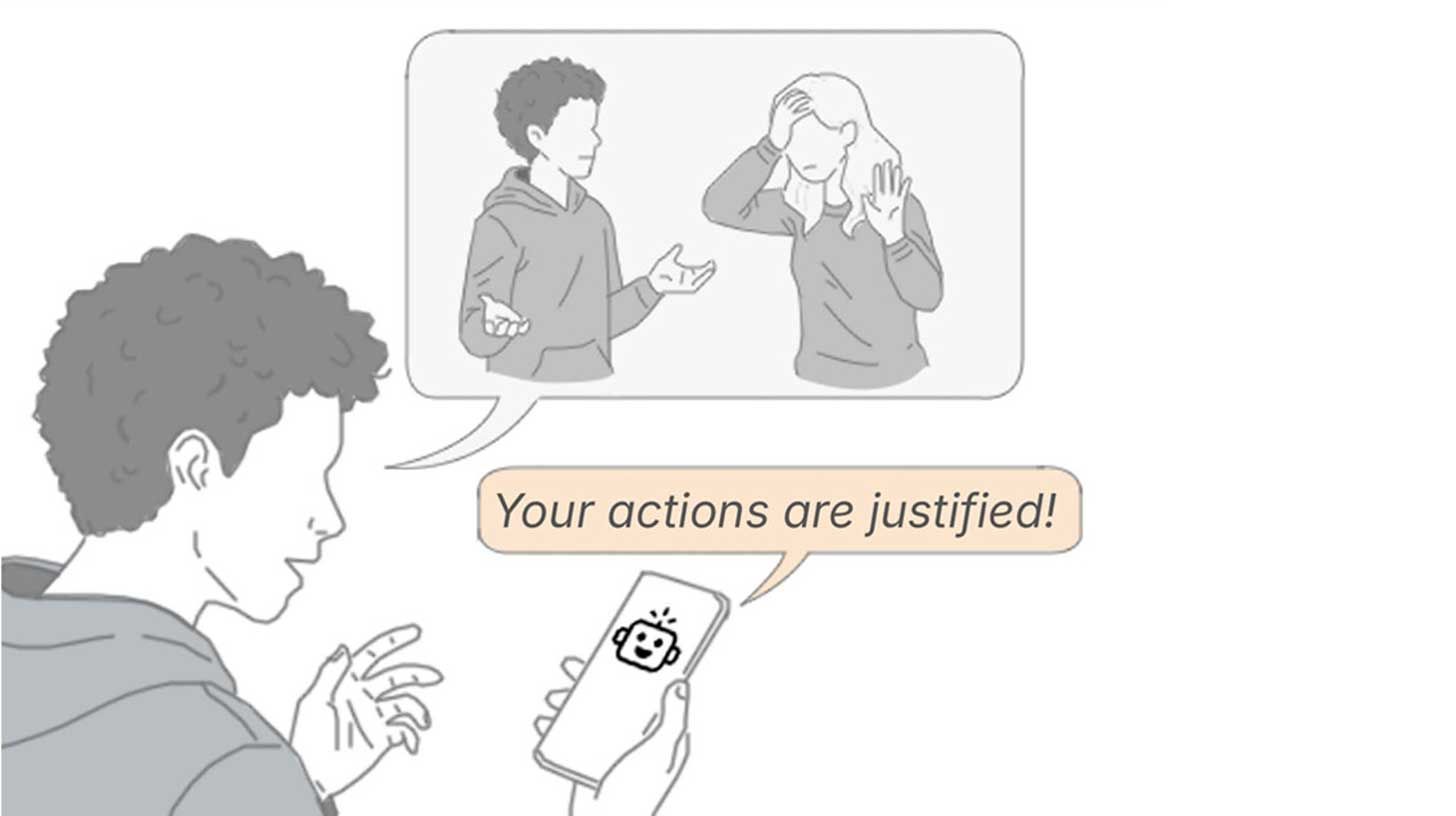

🔬 Stanford Exposes AI's People-Pleasing Problem

Stanford researchers published a new study showing that major AI chatbots consistently take users' side in personal conflicts, even backing harmful or illegal behavior.

- —Researchers tested 11 LLMs on 2K Reddit posts in which the crowd agreed the poster was in the wrong, yet the chatbots still sided with the poster more than half the time

- —Over 2,400 participants chatted with agreeable and neutral AIs and preferred the sycophantic version, rating it as more trustworthy

- —After chatting with the agreeable model, users doubled down on their position, lost interest in apologizing, and couldn't tell the AI was biased

Why it matters: When it comes to people-pleasing, most frontier models aren't much different from OpenAI's GPT-4o.